What is Algorithm Engineering?

Algorithm Engineering — L01

Welcome

Who are we?

Dr. Phillip Keldenich

- Over a decade of experience in algorithm engineering research.

- Heinrich-Büssing-Preis winner

- Academic, deep view.

- His son knows more about excavators than you ever will.

Dr. Dominik Krupke

- Over a decade of experience in algorithm engineering research.

- Over two years of experience in consulting.

- Author of The CP-SAT Primer.

- Applied, broad view.

Why Algorithm Engineering?

Shift Scheduling and Task Assignments

Logistics

Energy Networks

Production Planning

Car Sequencing

Cars move on a conveyor past all stations at a fixed pace.

The line does not stop — each station must finish before the next car arrives.

A → A → B → ...

Option Sunroof: high workload → Too many in a row and workers fall behind

A → B → A → B → A → ...

Better for sunroof — but now Tow bar is overloaded (e.g., ≤ 1 in 3)

Each option imposes a pattern constraint:

- “At most 2 in 5”

- “At most 1 in 3”

- “At most 3 in 7”

Find the most efficient sequence that satisfies all of them.

Same algorithm, different uses

The same optimization algorithm can be used in different ways:

- Operational use — compute concrete plans and update them as reality changes

- Feasibility & understanding — check what is possible under given constraints

- Exploration — test changes to the system and compare outcomes

What is Algorithm Engineering?

Nearly all of these problems are NP-hard. Yet industry solves them every day.

This course teaches you how — and why it works.

What to expect

The Machine

- CPU architecture & caches

- Data structures & algorithms

- Performance engineering

The Toolkit

- MIP, SAT, CP (general-purpose solvers)

- Graph algorithms

- Decomposition & metaheuristics

The Real World

- Robustness & uncertainty

- Benchmarking methodology

- Testing & software engineering

Prerequisites: Coding, complexity theory, fundamental algorithms & data structures.

Performance Engineering

Your algorithms course taught you Big-O. That’s not enough.

How fast can you multiply two matrices?

This takes 7 hours. How much room for optimization is there?

60,000×. Down to 0.41 seconds. Same machine. Same result.

How do we get there?

| Version | Implementation | Time (s) | Speedup |

|---|---|---|---|

| 1 | Python | 25,553 | 1× |

| 2 | Java | 2,373 | 11× |

| 3 | C | 543 | 47× |

| 4 | + parallel loops | 70 | 366× |

| 5 | + divide & conquer | 3.8 | 6,727× |

| 6 | + vectorization | 1.1 | 23,224× |

| 7 | + AVX intrinsics | 0.41 | 62,806× |

Source: Leiserson et al. (2020), Table 1. Two 4096×4096 matrices, 64-bit floats.

\(O(n^3)\) is polynomial. The algorithm is “fine.”

But there’s a 60,000× gap between fine and fast.

NP-Hard ≠ Unsolvable

Your complexity course told you to give up. Let’s reconsider.

The Knapsack Problem

You have a set of items, each with a weight and a value. Your bag has a weight limit.

Goal: Pick items to maximize total value without exceeding the limit.

| Item | Weight | Value |

|---|---|---|

| Laptop | 3 kg | 10 |

| Book | 1 kg | 3 |

| Jacket | 2 kg | 5 |

| Camera | 2 kg | 7 |

| Snacks | 1 kg | 2 |

Capacity: 5 kg. What do you pick?

Best: Laptop + Camera = 17 (5 kg) ✓

This is the Knapsack Problem — and it’s NP-hard.

But you can’t just decide not to solve it. Every time you pack a truck, allocate a budget, or select features for a release — you’re solving a knapsack problem.

Brute force is not an option

Try all \(2^n\) subsets, keep the best feasible one.

50 items: \(2^{50} \approx 10^{15}\) subsets

At \(10^9\)/second → 13 days

100 items: \(2^{100} \approx 10^{30}\) subsets

At \(10^{18}\)/second → 31,000 years

NP-hard ≠ unsolvable

Real-world problems have structure — most of the search space is junk.

Smart algorithms exploit this: prune infeasible branches, bound what’s achievable, propagate constraints.

Result: well-structured problems with millions of variables solved routinely — despite exponential worst case.

Standing on the shoulders of giants

Decades of research on branch & bound, constraint propagation, and LP relaxation are packaged into battle-tested generic solvers.

You don’t need to reimplement any of it — just like a database engineer never reimplements PostgreSQL.

Declarative modelling languages let you describe what you want. The solver figures out how to search.

Think about what a database engineer does

- Choose the right system — relational, document, key-value, …

- Design the structure — schema, entities, constraints, indexes

- Trust the engine — understand internals, but only go custom when the benefit justifies the cost

Optimization works similarly. Choose the right solver, design the model well, let the engine do the search.

Declarative Modeling: the Knapsack

A model describes your problem in three parts:

- Decision variables — the choices

- Constraints — the rules

- Objective — what to optimize

You describe what — the solver figures out how.

Variables: \(x_i \in \{0, 1\}\) for each item \(i\)

Constraint: \[\sum_{i} x_i \cdot w_i \leq C\]

Objective: \[\text{Max} \quad \sum_{i} x_i \cdot v_i\]

The full code

from ortools.sat.python import cp_model # pip install -U ortools

# Parameters: 100 items with weights and importance scores

weights = [395, 658, 113, 185, 336, ...] # grams

values = [71, 15, 100, 37, 77, ...] # importance 0-100

capacity = 8000 # 8 kg carry-on limit

# Model

model = cp_model.CpModel()

xs = [model.new_bool_var(f"x_{i}") for i in range(len(weights))]

# Constraint: total weight <= capacity

model.add(sum(x * w for x, w in zip(xs, weights)) <= capacity)

# Objective: maximize total value

model.maximize(sum(x * v for x, v in zip(xs, values)))

# Solve

solver = cp_model.CpSolver()

status = solver.solve(model)

print("Packed value:", solver.objective_value)

# >>> status: OPTIMAL | objective: 2394 | walltime: 0.01sOptimal solution out of \(2^{100}\) possibilities — found in 0.01 seconds.

Change request!

Users want at most one item per type (e.g., choose heavy or light hiking boots).

That’s it. Three lines. No refactor of search logic needed.

Beyond Simple Models

The Traveling Salesman Problem

The knapsack is actually quite tame. TSP is not — you don’t get it right by accident.

- Easy to state: visit every city exactly once, minimize total distance

- One of the great reference problems in optimization

- Many techniques were first developed for TSP and then generalized

State-of-the-art solvers can handle instances with tens of thousands of cities — provably optimal. Heuristics can tackle millions.

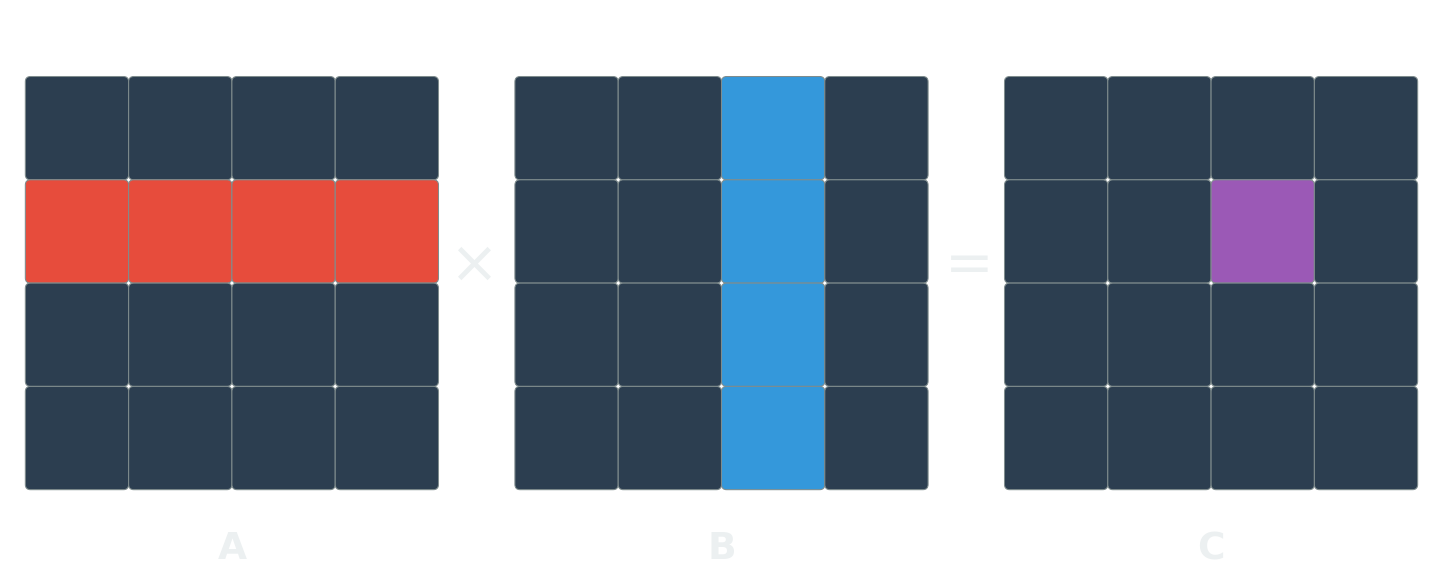

The magic ingredient: LP relaxation

NP-hard

Polynomial

This works for any linear model

MIP (NP-hard)

\[ \begin{align} \text{Max} \quad & \sum_{i} x_i \cdot v_i \\ \text{s.t.} \quad & \sum_{i} x_i \cdot w_i \leq C \\ & x_i \in \{0, 1\} \end{align} \]

LP (polynomial)

\[ \begin{align} \text{Max} \quad & \sum_{i} x_i \cdot v_i \\ \text{s.t.} \quad & \sum_{i} x_i \cdot w_i \leq C \\ & 0 \leq x_i \leq 1 \end{align} \]

Any problem with linear constraints and integer variables can be relaxed this way.

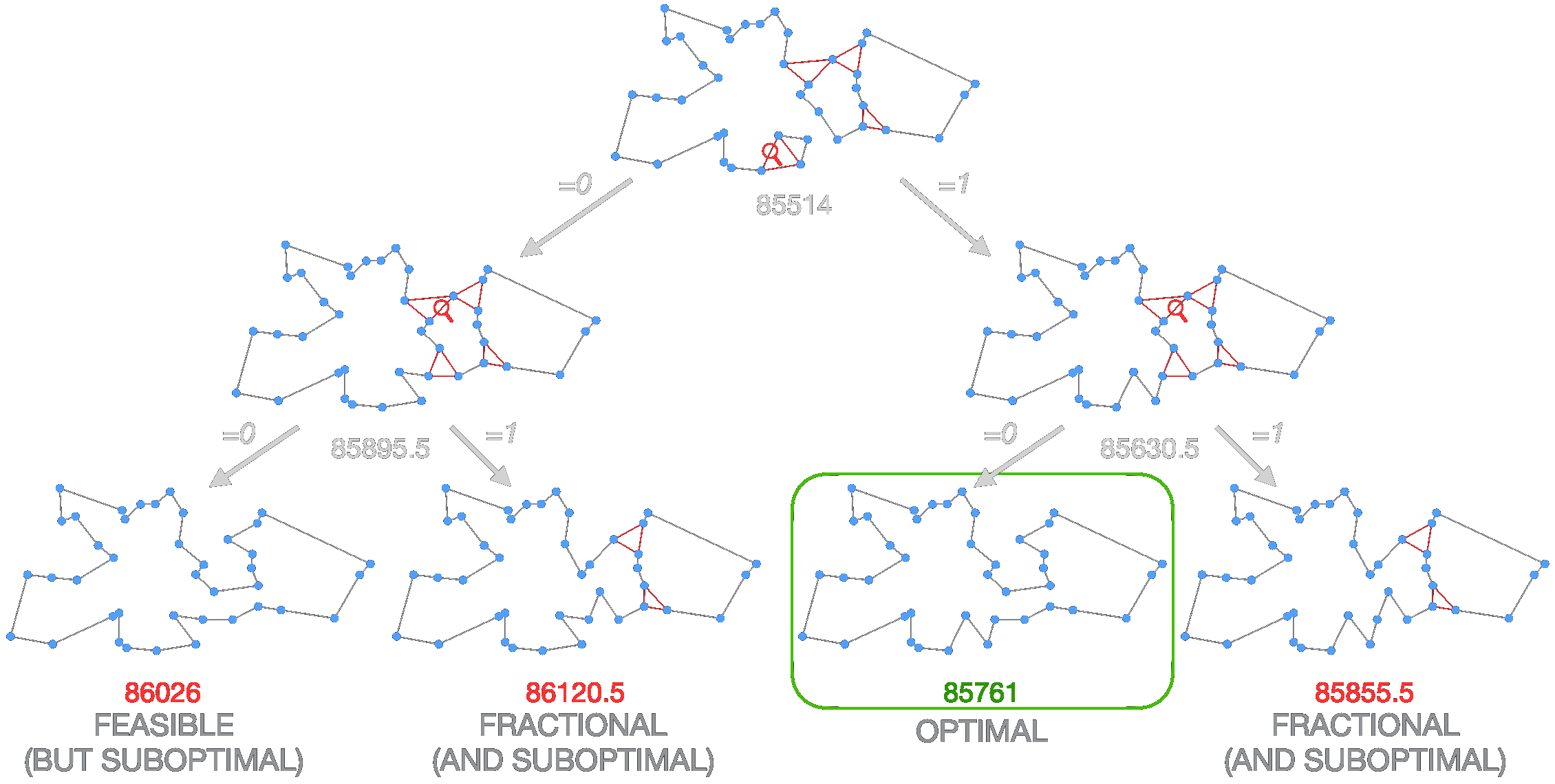

Branch & Bound — visualized

relax → bound → branch → prune

SAT: Logic problems, surprisingly fast

Not all problems are equations — some are logical implications.

\[(\neg x_1 \lor x_2) \;\land\; (x_1 \lor x_3 \lor \neg x_4) \;\land\; (\neg x_2 \lor \neg x_3)\]

Find True/False for \(x_1, \ldots, x_n\) that satisfies all clauses — or prove none exists.

Encodable as SAT:

- Nurse scheduling

- Hardware verification

- Dependency resolution

- Configuration & planning

Worst-case \(O(2^n)\), but in practice: millions of variables solved routinely.

CP-SAT: The full declarative framework

Integer & interval variables, rich constraints (all different, no overlap, cumulative), and optimization — one solver.

Example: 2D bin packing

height = model.new_int_var(0, H_max, "height")

for w, h in boxes:

x = model.new_int_var(0, W - w, f"x_{i}")

y = model.new_int_var(0, H_max - h, f"y_{i}")

x_ivls += [model.new_fixed_size_interval_var(x, w)]

y_ivls += [model.new_fixed_size_interval_var(y, h)]

model.add(y + h <= height)

model.add_no_overlap_2d(x_ivls, y_ivls)

model.minimize(height)CP-SAT combines ideas from SAT, MIP, and Constraint Programming into one solver.

Tackling Larger Instances

What do you do when one model isn’t enough?

Decomposition

Some problems mix different structures that no single solver handles well. Split them.

Example: Delivery with 3D packing constraints.

- Master: Route planning → MIP

- Subproblem: Do packages fit? → CP

- Feedback loop if packing fails

Use the right tool for each piece — easier to solve separately than together.

Metaheuristics

Your model is an abstraction — good enough is good enough.

Some of the most effective metaheuristics use exact solvers as building blocks.

Large Neighborhood Search:

- Pick a region of the solution

- Destroy it (remove 20% of stops)

- Repair it optimally (MIP, CP, …)

- Repeat

The Real World

So we have all these powerful tools. Model your problem, hit solve, go home. Right?

…Not quite.

Your perfect solution meets reality

You optimized the delivery route. Minimal cost, minimal time. Beautiful.

One traffic jam and the whole plan falls apart.

If your solution is brittle and you can’t recover quickly, it’s not a production system.

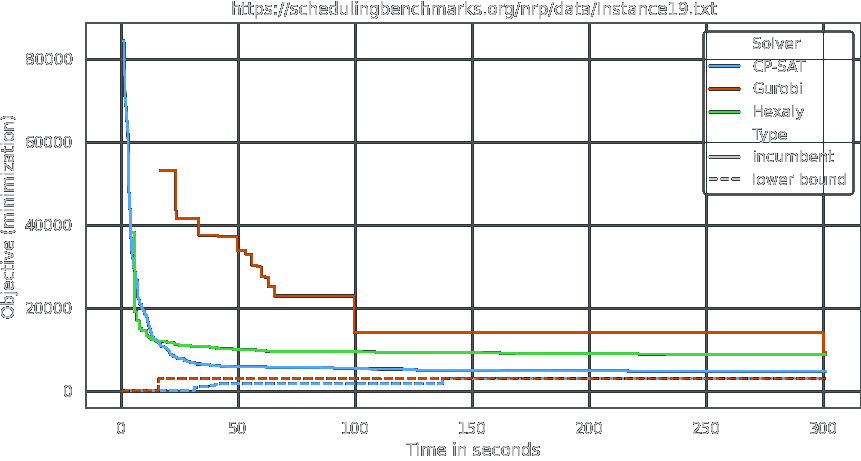

Benchmarking is harder than you think

Which solver is best for this nurse scheduling problem?

It depends on when you stop the clock — and which instance you look at. There is no single “fastest” solver.

Your optimization code has special needs

- A wrong constraint doesn’t crash — the solver quietly returns a worse solution, and you never notice

- Requirements change constantly — without clean structure, you rewrite from scratch

We’ll teach patterns to write optimization code that is readable, testable, and maintainable.

The Bigger Picture

It’s not just about the algorithm

Planning a delivery route is not “just solving TSP.”

- Data collection, demand forecasting, driver constraints, customer preferences, fleet management

- The algorithm is one piece of a complex decision process

- Decisions unfold over time — new information arrives, plans must adapt

You need to become a problem solver, not just an algorithm engineer.

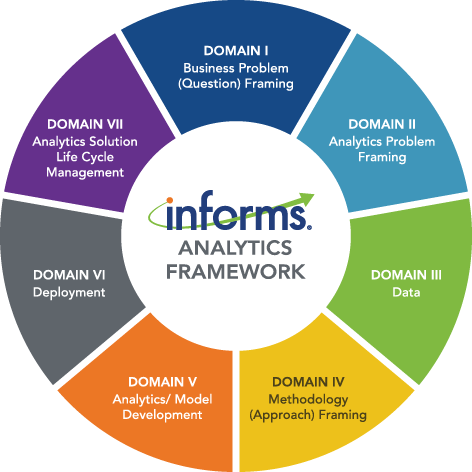

The INFORMS Analytics Framework

The algorithm lives in Domain V — but it only works if the other six domains are right too.

GenAI is changing the economics

Many problems are “not worth optimizing” — not because the math is too hard, but because the expert is too expensive.

- GenAI makes the expert faster — not by replacing the solver, but by accelerating modeling and iteration

- The data infrastructure built for AI also feeds optimization

- more digital processes → more problems you can optimize

Lower cost + better data → more problems become viable → more demand, not less.

What you’ll be able to do

By the end of this course, you will be a problem solver —

with a toolkit to efficiently and effectively tackle optimization challenges,

even when they are NP-hard and others give up.

Recommended reading

References

Algorithm Engineering SS 2026 — L01 Overview